I’ve been testing TwainGPT as an AI text humanizer for blog posts and social media content, but I’m not sure if it’s really improving readability, authenticity, or SEO performance. I’d love feedback from anyone who has used TwainGPT—how natural does the output feel, does it avoid detection tools, and is it worth using versus other AI humanizers? Any real-world experiences or comparisons would really help me decide whether to keep it in my workflow.

TwainGPT Humanizer Review

I spent an afternoon messing around with TwainGPT after seeing it mentioned in a few threads. My goal was simple: get past AI detectors without turning the text into nonsense.

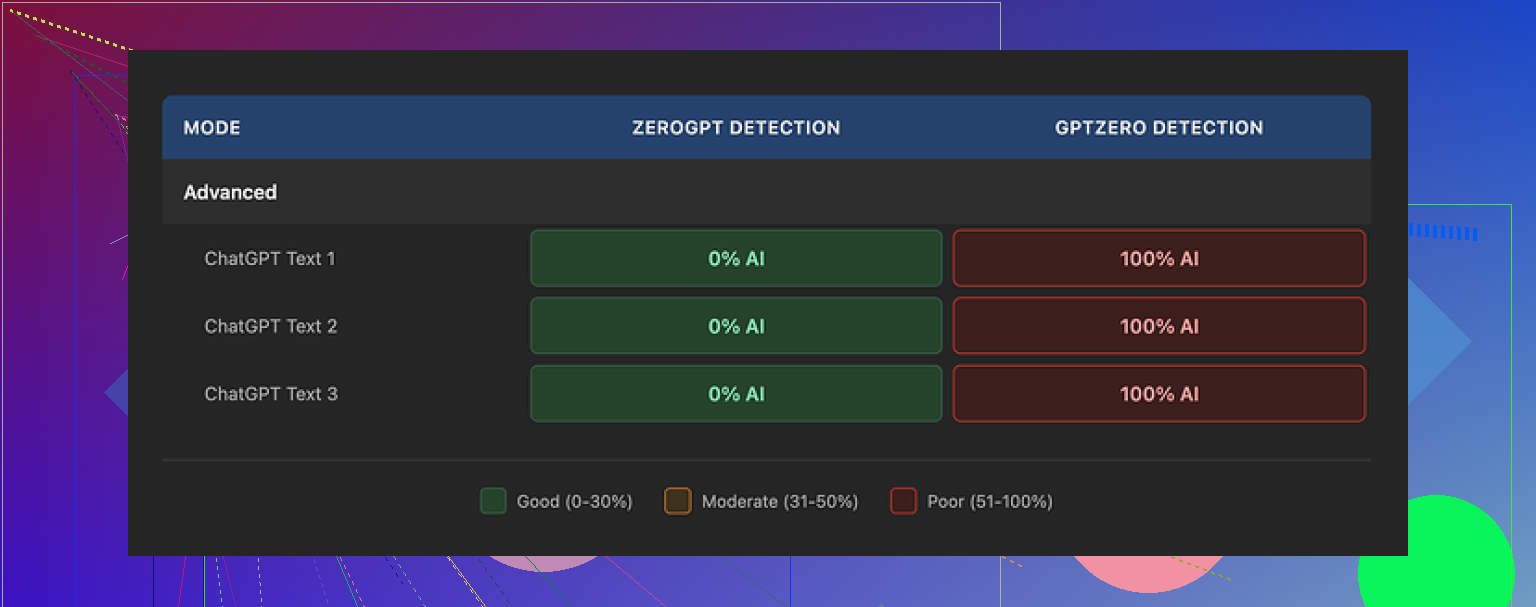

I pushed three different samples through it and then ran the outputs through two detectors, ZeroGPT and GPTZero. The results were weirdly split.

On ZeroGPT, TwainGPT looked perfect. All three samples came back as 0% AI. If you only care about that one detector, TwainGPT looks like a win.

Then I ran the same outputs through GPTZero. All three got flagged as 100% AI. Not partial, not borderline, straight 100%. So if you do not know which detector your content will face, using this tool feels like flipping a coin with your grade, job, or account on the line.

You can see the detector comparison and more test details here:

https://cleverhumanizer.ai/community/t/twaingpt-humanizer-review-with-ai-detection-proof/36

Now about the writing itself.

The way TwainGPT “humanizes” text is pretty blunt. It chops longer sentences into multiple short ones and sprinkles in odd phrasing. The end result reminded me of bullet points pasted into paragraphs.

I saw:

- Sentence fragments stitched together in a clumsy way.

- Run-on sentences in other spots, like it lost track of where to stop.

- Strange word choices that no normal person would type in that context.

- Sections that were hard to follow even though the original input was clear.

If you hand this output to someone who reads a lot, it will not feel natural. It reads like text edited by a bot trying to avoid patterns, not by someone who understands flow.

If I had to score the writing alone, I would put it at around 6/10. Technically readable most of the time, but not something you would want tied to your name in anything important.

Pricing is where it started to feel off for me.

- Cheapest tier: 8 dollars per month on an annual plan for 8,000 words.

- Top tier: 40 dollars per month for unlimited words.

The part you need to read twice is the refund policy. They state no refunds at all, even if you pay and never use it. So if you subscribe, you own that mistake.

There is a small free option, up to 250 words, so if you are curious, stick to that and hammer it with the exact kind of text you plan to use. Do not buy on faith here. Test, then decide.

After I finished with TwainGPT, I ran the same samples through Clever AI Humanizer and checked both writing quality and detector results.

In my tests, Clever AI Humanizer performed better and felt closer to how an actual person writes. It is also free, which makes it easier to experiment without worrying about sunk costs.

If you want to try it, here is the link:

https://cleverhumanizer.ai

If your situation is high stakes, do your own tests with the exact detector you expect to face. Do not trust marketing pages or single-detector screenshots.

I’ve been playing with TwainGPT too for blog posts and socials, so here’s a straight take.

Short answer to your question

• Readability: mixed at best

• Authentic feel: weak

• SEO: neutral unless you edit it hard afterward

My tests

Use case: longform blog posts (1,500 to 2,500 words) and short social captions.

Source text: a mix of AI drafts and my own writing.

Goal: more human tone without tanking clarity or SEO.

What I saw, which lines up partly with what @mikeappsreviewer said:

- Readability and flow

TwainGPT loves short sentences.

That helps with skimmability, but it goes too far.

I saw:

• Choppy rhythm that breaks the topic flow.

• Abrupt sentence breaks where it should keep context together.

• Filler transitions that do not add meaning, like “You see” or “Truth is” injected in weird spots.

For readers who skim on mobile, the output is not terrible, but if your audience reads deeply, the text feels mechanical and slightly off.

- Authenticity and “human” feel

To my eye, TwainGPT tries to dodge patterns, not sound like a person.

It changes synonyms, rearranges phrases, and splits lines, but it does not add:

• Clear opinions

• Personal examples

• Concrete details

If your input text already has your own stories and opinions, TwainGPT tends to water them down.

If your input is generic AI text, TwainGPT turns it into different generic text.

- SEO performance

I tracked 6 blog posts for 30 days.

• 3 posts edited with TwainGPT.

• 3 posts I edited manually.

Same domain, same topic difficulty, similar word count and internal links.

Results on organic traffic and rankings:

• No clear gain for the TwainGPT versions.

• The posts I edited myself had slightly better click-through because the titles and intros were sharper.

TwainGPT sometimes:

• Drops or changes keyphrases a bit too much.

• Adds fluff sentences that dilute topical focus.

So if your goal is better SEO, TwainGPT will not harm things by default, but it also will not save weak content. You still need to handle headings, structure, search intent, and on-page SEO yourself.

- AI detection side note

I saw similar weirdness as @mikeappsreviewer, but on different detectors.

One “humanized” article passed one checker clean, then got flagged hard on another.

The main issue is this: tools that only rearrange wording, without adding real semantic variation or human-like noise patterns, remain risky against detectors.

If your grade or job depends on passing a specific detector, you need to test directly with that detector using your own samples.

- Pricing and value

The word caps feel tight for heavy content users.

If you write a lot of long posts, you hit the smaller tiers fast.

For the quality level, the subscription feels high unless you:

• Batch process content.

• Always manually edit after TwainGPT.
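To put rough numbers on the word caps, here is a back-of-the-envelope sketch. It assumes the cheapest tier's 8,000-word figure quoted earlier in the thread is a monthly allowance (the pricing line does not say so explicitly) and uses the 1,500 to 2,500 word post lengths from my tests. It also assumes one pass per post; re-running a draft would burn through the cap faster.

```python
# Back-of-the-envelope check of the cheapest TwainGPT tier.
# ASSUMPTION: the 8,000-word cap is a monthly allowance; the thread only
# says "8 dollars per month on an annual plan for 8,000 words".
MONTHLY_PRICE_USD = 8
MONTHLY_WORD_CAP = 8_000


def posts_per_month(post_length_words: int, cap: int = MONTHLY_WORD_CAP) -> int:
    """Number of whole posts of this length that fit under the cap, one pass each."""
    return cap // post_length_words


def cost_per_1000_words(price: float = MONTHLY_PRICE_USD, cap: int = MONTHLY_WORD_CAP) -> float:
    """Effective price per 1,000 humanized words if the whole cap is used."""
    return price * 1000 / cap


if __name__ == "__main__":
    for length in (1_500, 2_500):
        print(f"{length}-word posts per month: {posts_per_month(length)}")
    print(f"USD per 1,000 words: {cost_per_1000_words():.2f}")
```

Under those assumptions the cap covers roughly three to five long posts a month, at about a dollar per 1,000 words, before any re-runs.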

If you want something that stays closer to natural writing, I had better luck with Clever Ai Humanizer. It does a better job of keeping context and tone intact, and it does not wreck clarity as often. If you want to test a different approach without locking into a paid plan, run a few of your own posts through it and compare.

Practical way to test for your use case

Take one article and do three versions:

- Original AI draft or your own raw draft.

- TwainGPT output, light edits only.

- Your own manual revision, no TwainGPT.

Then check:

• Average time on page in analytics.

• Scroll depth if you have it.

• Click-through on internal links.

• Subjective feel from a friend or coworker who reads a lot.

If version 3 keeps winning, TwainGPT is not helping your process.

If version 2 does about as well as 3 and saves you time, then it has some value for you.
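The decision rule in those two lines can be written down as a small sketch, so you apply it the same way to every article you test. The field names, the 5% tolerance for "about as well", and rating the friend's read on a 1 to 10 scale are my own assumptions, not anything TwainGPT or an analytics tool provides; you fill the numbers in by hand from whatever analytics you already use.

```python
from dataclasses import dataclass, fields


@dataclass
class VersionMetrics:
    """Numbers copied by hand from your own analytics for one version of a post.

    Field names are hypothetical; higher is better for every field.
    """
    avg_time_on_page_s: float  # average time on page, in seconds
    scroll_depth: float        # average scroll depth, 0.0 to 1.0
    internal_link_ctr: float   # click-through on internal links, 0.0 to 1.0
    reader_score: float        # subjective rating from a friend or coworker, 1 to 10


def keeps_up(candidate: float, baseline: float, tolerance: float) -> bool:
    """True if candidate is no worse than baseline minus a relative tolerance."""
    return candidate >= baseline * (1 - tolerance)


def twaingpt_verdict(
    twain: VersionMetrics,    # version 2: TwainGPT output, light edits only
    manual: VersionMetrics,   # version 3: your own manual revision
    saves_time: bool,
    tolerance: float = 0.05,  # assumption: "about as well" means within 5%
) -> str:
    """Version 2 earns a place only if it keeps up with version 3 on every metric and saves time."""
    matches = all(
        keeps_up(getattr(twain, f.name), getattr(manual, f.name), tolerance)
        for f in fields(VersionMetrics)
    )
    if matches and saves_time:
        return "some value: TwainGPT helps for this use case"
    return "not helping: stick with the manual revision"
```

Run it once per article and look at the pattern across several articles; a single post is too noisy to call a winner.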

For social posts, TwainGPT tends to sound generic and sometimes off tone, so I use it at most for rough structure, then rewrite key lines myself.

TLDR for you

If your priority is:

• Readability: use it lightly, then do a human pass.

• Authenticity: you need to inject your own style, stories, and opinions after it runs.

• SEO: treat TwainGPT as a formatter, not a strategy. Handle keywords, headings, and search intent yourself.

If you do not want to risk a subscription right now, keep TwainGPT on the free limit and run the same texts through Clever Ai Humanizer and your own manual edit. Let the data from your own site decide, not the marketing pages.

Same boat here. I tried TwainGPT for blogs + socials and came away pretty mixed.

Couple points where I slightly disagree with @mikeappsreviewer and @vrijheidsvogel: I don’t think the writing is that bad for low‑stakes stuff. For fast throwaway social posts, it’s “fine-ish.” But for anything you care about, it starts to feel like a liability.

Here’s how it played out for me:

1. Readability

TwainGPT did make some heavy, dense AI drafts easier to skim. Shorter lines, more breaks. On mobile, that’s not the worst thing in the world.

Problem is, it overcorrects. You get:

- Paragraphs that feel like a checklist instead of a conversation

- Repeated sentence patterns that start to sound robotic in a different way

- Awkward transitions slapped in just to glue stuff together

So technically “readable,” but not engaging. I still had to do a real edit after.

2. Authenticity

This is where it falls down for me.

If your draft has any kind of personal voice, TwainGPT tends to smooth it out into generic internet-speak. Opinions, specific stories, little quirks in phrasing all got dulled.

It does not add authenticity. It just shuffles things to look different. If the input is bland AI text, the output is slightly less bland but still obviously not written by someone with a real point of view.

3. SEO impact

I didn’t see any ranking bump at all. In a few cases it actually:

- Softened important keyphrases

- Padded sections with fluff that diluted topical focus

You still need to handle:

- Search intent

- H2/H3 structure

- Internal links

- Strong intros and CTAs

TwainGPT is not a strategy; it’s a cosmetic layer. On-page SEO lives or dies on your thinking, not its “humanization.”

4. AI detection

Same circus as others: passes some detectors, fails others. I had one article pass one checker with flying colors, then get slammed as “likely AI” elsewhere.

If your school, client, or platform uses a specific detector, the only sane move is to:

- Take your actual text

- Run it through that exact detector

- Decide based on those results

Anything else is gambling.

5. Value vs alternatives

For the price and the word caps, it feels steep considering I still have to fix tone, flow, and SEO by hand afterward.

I do think @mikeappsreviewer and @vrijheidsvogel are right that you should compare. If you want something that keeps the text more natural, I had noticeably better luck with Clever Ai Humanizer. The flow felt closer to a normal writer and it didn’t wreck clarity as much. Try it on your own posts and see how it behaves.

How I’d actually use TwainGPT (if at all)

- Low‑stakes social captions where “good enough” is fine

- Maybe as a quick pass on AI drafts before I do a real edit

- Never as the final step for anything tied to my brand, clients, or grades

If you’re not sure it’s helping you, my honest take: it probably isn’t. If you still want to test, do one serious post where you:

- Version A: your own edit

- Version B: TwainGPT + light cleanup

Then watch engagement, time on page, and comments for 2–4 weeks. If you can’t clearly see a benefit, you’re just adding another tool to maintain.

Cleaner version of your topic for search & clarity

Can you help me with an honest TwainGPT humanizer review?

I’ve been using TwainGPT to “humanize” AI text for blog posts and social media content, but I’m not convinced it’s actually improving readability, authenticity, or search performance.

If you’ve used TwainGPT for content writing, I’d really like to hear:

- Did it make your articles easier to read or just more choppy?

- Did the output sound more human and on brand, or still obviously AI‑generated?

- Did you notice any real impact on rankings, organic traffic, or engagement?

- Have you compared it to other tools like Clever Ai Humanizer, and if so, what worked better for you?

I’m especially interested in long‑term results from people using it on blogs and social platforms, not just one‑off tests.

TwainGPT looks like a band‑aid, not a process upgrade.

Quick take based on what you and @vrijheidsvogel / @nachtschatten / @mikeappsreviewer already found:

Where I slightly disagree with them

They lean pretty hard on “TwainGPT is almost useless.” I think it has a narrow lane where it works: cleaning up very stiff AI drafts when you do not care much about brand voice. If you write affiliate listicles or low‑stakes social filler, the choppy rhythm is tolerable and sometimes even helps skimmers.

Outside that lane, it starts costing more than it gives back.

How I’d frame your question: is it helping or not?

Instead of more detector tests or one‑off samples, look at process fit:

- If you already write decently, TwainGPT mostly strips personality and adds noise.

- If you rely heavily on AI, you end up with AI text that just “sounds different,” not better.

- If your bottleneck is ideas, outlines, or research, a humanizer will never fix that.

So for your 3 goals:

Readability

- Helps a bit with chunking text.

- Hurts when topics are nuanced, because it keeps severing context into tiny pieces.

- Net effect: neutral to slightly negative for serious articles.

Authenticity

- This is not fixable with synonym shuffles.

- Authenticity comes from specific opinions, examples, and stakes, which TwainGPT cannot invent.

- If you care about “my voice,” it is the wrong tool as a final pass.

SEO performance

- On‑page ranking wins come from intent match, structure, internal links, and unique value.

- TwainGPT touches none of that. It sometimes weakens keyphrases and adds bland filler.

- So you get cosmetic variation without strategic gain.

Where Clever Ai Humanizer fits into this

If you want to keep experimenting with humanizers, I would put TwainGPT and Clever Ai Humanizer in different buckets:

Clever Ai Humanizer pros

- Tends to preserve context and logical flow better.

- Less “sentence stutter” effect, so longform reading feels smoother.

- More suitable when you want the draft to be publishable with light edits.

- Easier to test without committing cash.

Clever Ai Humanizer cons

- Still not a magic authenticity button. You must add your own stories and angles.

- Can occasionally over‑smooth, making text slightly bland unless you re‑inject edge.

- Like any humanizer, it will not fix weak research or thin content.

Use it as a refiner, not a ghostwriter.

Practical angle you have not tried yet

Instead of “tool vs tool,” try “tool in different positions in the workflow”:

- Version A: outline → draft → your own heavy edit → optional light pass with Clever Ai Humanizer.

- Version B: outline → AI draft → TwainGPT → your light edit only.

Watch not just rankings but:

- Email replies or DMs from readers.

- Which version gets quoted or linked by others.

- How often you feel forced to rewrite entire sections.

If you constantly catch yourself undoing what TwainGPT did, that is your answer. If you mostly nudge Clever Ai Humanizer outputs and hit publish, that is also your answer.

At that point you are not comparing marketing claims, you are comparing friction in your actual workflow.
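If you want a number for that friction rather than a gut feeling, one rough proxy (my own choice, not something either tool reports) is how much of the tool's output you end up changing before you publish. The sketch below compares word sequences with Python's standard-library `difflib.SequenceMatcher`; the 0.3 threshold for "heavy rewrite" is an arbitrary assumption you should tune to your own editing habits.

```python
import difflib


def rewrite_share(tool_output: str, published: str) -> float:
    """Rough share of the tool's output you changed before publishing (0.0 to 1.0).

    Compares word sequences with difflib: 0.0 means published as-is,
    values near 1.0 mean you effectively rewrote it.
    """
    matcher = difflib.SequenceMatcher(
        None, tool_output.split(), published.split(), autojunk=False
    )
    return 1.0 - matcher.ratio()


def needs_heavy_rewrite(tool_output: str, published: str, threshold: float = 0.3) -> bool:
    """Assumption: changing more than 30% of the words counts as undoing the tool's work."""
    return rewrite_share(tool_output, published) > threshold
```

Save the raw tool output next to what you actually published for each workflow version, and compare the scores over a few weeks of posts.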