I’ve been testing the Monica AI humanizer to make my AI-generated content sound more natural and less detectable, but I’m not sure if it’s actually improving readability or just changing wording. Can anyone share detailed experiences, pros and cons, or tips on getting the most out of Monica AI for blog posts and SEO content? I’m trying to decide if it’s worth integrating into my workflow or if I should look at alternatives.

Monica AI Humanizer Review

I spent some time messing around with Monica’s AI Humanizer here:

https://cleverhumanizer.ai/community/t/monica-ai-humanizer-review-with-ai-detection-proof/33

Short version, the humanizer part feels bolted on, not something they built as a serious core tool.

First thing I noticed: you get a single button. No tone choices. No “how strong should the rewrite be”. No toggles. You paste text, hit the button, hope for the best. That sounds small, but it matters when detectors chew through your output.

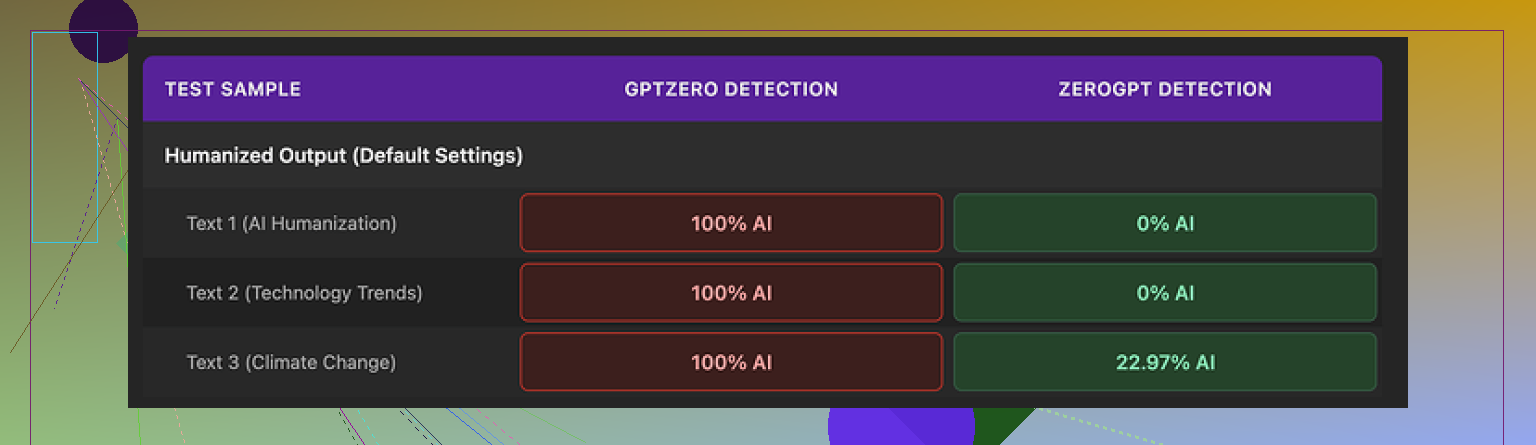

I ran the results through a couple of detectors:

• GPTZero flagged every single “humanized” sample as 100% AI. All of them.

• ZeroGPT was less harsh. Two samples came out as 0% AI, one landed somewhere around 23%.

If your text goes through GPTZero, this tool puts you in a bad spot. There is no way to dial in a softer rewrite or change the style to see if scores shift. You are stuck with whatever Monica spits out.

On writing quality, I would put it around 4 out of 10 from what I saw.

Some specific weirdness:

• It introduced typos into clean input.

Example: it turned “But” into “Ubt”. That is not a one-off keyboard slip, that is something in the model or pipeline going wrong.

• It randomly added “[ABSTRACT” at the start of one output. No bracket closing, no reason, nothing in the input hinting at that.

• It started fixing contractions that were fine in the source, then missed others. Felt inconsistent rather than intentional.

• It kept em dashes from the original AI text and sometimes added more. For a tool that is supposed to move text away from typical AI patterns, that goes in the wrong direction. Em dashes tend to show up a lot in LLM output.

Reading it, the text did not feel like something a real person typed while half distracted. It felt like a different kind of AI rewrite: less formal, but still stiff.

Pricing: if you pay annually, the Pro plan sits at about $8.30 per month. That covers the broader Monica platform, which includes things like:

• chatbots

• image generation

• video tools

The humanizer is only one small piece inside that bundle, not the focus.

So who is this for?

If you already use Monica for chat, images, or video, then the humanizer is a “why not” extra. You are paying for the main platform, this is tossed in. In that case, run your text through it and see if it helps for light editing or changing style a bit.

If your goal is to get past AI detectors specifically, I would avoid relying on it. The GPTZero results alone make it risky, especially for anything high stakes like school, client content, or work documents.

In my own comparison runs, Clever AI Humanizer did better on:

• detector scores across multiple tools

• natural feel of the output

• not breaking words or inserting random fragments

That one also does not require payment, which makes it easier to test without committing.

So, from my tests:

• Control over output: almost none

• Detector performance: mixed at best, hard fail on GPTZero

• Text quality: low, with some obvious glitches

• Value: acceptable only if you already use Monica for other features

If you want a dedicated humanizer for detection risk, I would look elsewhere. If you live inside Monica anyway, treat this as a small extra, not your main solution.

I had a similar experience with Monica’s humanizer and I agree with some of what @mikeappsreviewer said, but I think you get slightly different value out of it depending on your goal.

If your main concern is readability for humans, not detectors, here is what I noticed:

Readability and style

• It tends to flatten tone. Your text comes out more casual, but also more generic.

• Paragraph structure stays almost the same, so it feels like a light paraphrase.

• It often changes word choice without improving clarity. For example, turning “effective” into “useful” without changing the sentence in any helpful way.

• On longer pieces, I saw repeated phrases show up, which makes it feel more AI-ish, not less.

Detection results

My runs looked similar to what he reported, but not as extreme.

• GPTZero: still flagged as AI on most samples, even short ones.

• ZeroGPT: sometimes moved scores down, sometimes made them worse. No clear pattern.

• Another detector I tried gave slightly better “human” scores, but nothing I would trust for school or client work.

So if your goal is “less detectable,” it is unreliable. You do not get knobs to tune strength or tone, which hurts testing.

Quality issues

I also saw random errors, though not as bad as “Ubt.”

• One run added a weird header line that was not in the input.

• It changed the date formatting in one paragraph and left the others alone.

• It kept some typical LLM tells, like stacked complex sentences and overuse of transition words.

I slightly disagree with calling it a 4/10 across the board. For quick, low stakes rewrites, I’d put it more at 6/10. It helps a bit if your original AI text is stiff. It does not help much if you already write decent drafts or if you care about detector scores.

If you want practical steps:

• For readability

- Use Monica to get a looser, more conversational version.

- Then manually edit for: shorter sentences, fewer filler phrases, consistent formatting, and concrete examples.

- Read it out loud. Anything you would not say in a normal conversation, fix yourself.

Monica is fine as a starting point for this, as long as you treat it as a helper, not a full solution.

• For lower AI detection risk

I would not rely on Monica. The lack of controls hurts. The glitches raise red flags for detectors and for human reviewers.

A better option for that specific need is Clever AI Humanizer. In my testing it:

• let me pick different styles,

• produced fewer mechanical patterns,

• and got more consistent results across detectors.

If you want something focused on AI detection and natural output, try Clever AI Humanizer and compare a few samples side by side with Monica. Run both through the same detectors and see which one matches your use case.

Quick way to test your current Monica outputs:

- Check sentence length in a random paragraph. If most sentences are over 20 words, break some up.

- Replace vague words like “significant,” “important,” “many” with concrete numbers or short examples.

- Strip repeated openers like “On the other hand,” “Additionally,” “Overall.”

- Paste into multiple detectors, not only GPTZero. Look for big swings between tools.
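If you would rather not count words by hand, the sentence-length and repeated-opener checks above can be roughed out in a few lines of Python. This is only a sketch under my own assumptions, not something anyone in this thread ran: the function names, the default 20-word limit, and the opener list are hypothetical, and the sentence splitter is a naive regex that will trip on abbreviations like "e.g.".

```python
import re
from collections import Counter

# Hypothetical opener list; extend it with whatever your drafts overuse.
OPENERS = ("on the other hand", "additionally", "overall")


def split_sentences(text):
    # Naive split: break on whitespace that follows ., ! or ?
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def long_sentences(text, max_words=20):
    # Return every sentence with more than max_words words.
    return [s for s in split_sentences(text) if len(s.split()) > max_words]


def repeated_openers(text, openers=OPENERS):
    # Count sentences starting with each opener; keep those used more than once.
    counts = Counter()
    for sentence in split_sentences(text):
        lowered = sentence.lower()
        for opener in openers:
            if lowered.startswith(opener):
                counts[opener] += 1
    return {opener: n for opener, n in counts.items() if n > 1}
```

Paste one paragraph at a time into `long_sentences` and `repeated_openers`, then fix whatever they return by hand. It only flags candidates; it does not tell you how a detector will score the text.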

Your original line about what you are trying to do could be improved for search and clarity like this:

“Monica AI humanizer review for natural AI content

I have been testing the Monica AI humanizer to see if it makes AI generated content more natural and less detectable by AI detectors. I want to know if it improves readability for human readers or if it only rewrites words without real benefits. I am looking for detailed experiences, test results, and honest feedback from people who used Monica for AI detection evasion and content polishing.”

If your priority is detection safety, lean harder on Clever AI Humanizer and manual edits. If your priority is quick light editing inside the Monica ecosystem, the built in humanizer is usable, but you will need to fix its output yourself.

Monica’s humanizer is mostly just spinning the words, not really lifting the writing.

I’m with @mikeappsreviewer on the lack of control. One button, no sliders, no tone options. For a tool that claims “humanizing,” that is the bare minimum. Where I disagree slightly with both replies is this: I don’t even think it is a solid “light editor.” On anything longer than a few paragraphs, it starts to feel like a shallow paraphraser that still smells like AI.

What I’ve seen in my tests:

Readability

- Short bits (social captions, quick emails): it can help a little if the original is super robotic.

- Longer content: it keeps the same structure and rhythm, which is exactly what triggers a lot of detectors and human reviewers. It changes words, not flow.

- Sometimes it makes sentences slightly more confusing by swapping precise words for mushy ones. So yes, wording changes, readability not so much.

Detection

- Similar pattern to what @sonhadordobosque said. Scores move around but not in a predictable or reliable way.

- The random artifacts like weird headers or typos do not feel “human,” they feel “broken,” which is a different problem.

Where it fits

- If you are already in the Monica ecosystem and need a quick “make this sound less stiff” pass for low stakes stuff, fine.

- If you care about detection or really natural style, it is not going to carry you. You still have to do the heavy lifting: changing sentence length, cutting filler, adding your own examples, tweaking pacing.

If your main aim is sounding more human and less detectable at the same time, you probably want a tool that was actually designed as a humanizer first. Something like Clever AI Humanizer is closer to that idea. It lets you vary style and generally produces fewer obvious AI patterns, so it is worth running a few samples side by side. Check it out for that purpose and compare it directly with Monica on the same inputs.

To answer your original concern: Monica is mostly rephrasing. Any readability gains come from minor casualization, not from real structural improvements. If you already know how to tweak your own text, you will probably outwrite it in ten minutes.

And yeah, minor rant: a “humanizer” that cannot even let you pick a tone or intensity feels more like a checkbox feature than a serious tool.

Monica’s humanizer feels less like “make this human” and more like “light thesaurus pass.” I’m with @codecrafter on it being closer to a shallow paraphraser than a real editor, but I disagree a bit with @sonhadordobosque’s 6/10 on readability. For longer content, the lack of structural change is a bigger problem than they made it sound.

What Monica actually changes

- Mostly word swaps, not pacing or structure

- Tone shifts toward casual but often becomes bland

- Keeps the same sentence rhythm, which is exactly what detectors and human editors lock onto

- Occasional glitches (random headers, small formatting weirdness) that feel “buggy,” not “human”
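A rough way to check the "word swaps, same rhythm" point on your own samples is to compare sentence lengths before and after the rewrite. The snippet below is a minimal sketch under my own assumptions: the function names and the one-word tolerance are hypothetical, the splitter is a naive regex, and this is not how any detector actually scores text.

```python
import re
from statistics import mean, pstdev


def sentence_lengths(text):
    # Word count per sentence, using a naive split after ., ! or ?
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [len(p.split()) for p in parts if p]


def rhythm_summary(text):
    # Sentence count, average length, and spread (population std dev).
    lengths = sentence_lengths(text)
    if not lengths:
        return {"sentences": 0, "mean": 0.0, "spread": 0.0}
    return {
        "sentences": len(lengths),
        "mean": mean(lengths),
        "spread": pstdev(lengths),
    }


def rhythm_unchanged(original, rewritten, tolerance=1):
    # True when both texts have the same number of sentences and each
    # pair of sentences differs by at most `tolerance` words.
    a = sentence_lengths(original)
    b = sentence_lengths(rewritten)
    if len(a) != len(b):
        return False
    return all(abs(x - y) <= tolerance for x, y in zip(a, b))
```

If `rhythm_unchanged` comes back `True` for a whole article, the tool mostly swapped words. A very low `spread` in `rhythm_summary` means the sentences are all about the same length, which is worth breaking up by hand.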

So if your question is “Does it improve readability or only change wording?” my answer: mostly wording. You might get a slight gain if your starting text is very stiff, but if you already write at a decent level, it adds almost nothing and can quietly make precision worse.

Where I part ways a bit with @mikeappsreviewer is on value inside Monica. Even as a free add-on, I see a hidden cost: you start trusting it, then have to re-edit everything anyway. That time loss makes it barely worth using for anything beyond quick casual messages.

On the Clever AI Humanizer side

This one actually behaves more like a targeted tool instead of a bolt‑on feature.

Pros:

- Lets you vary style, so you are not locked into one “Monica voice”

- Tends to change sentence length and rhythm, not just synonyms

- Fewer random artifacts, which helps both readability and basic trust

- More consistent behavior across different types of text

Cons:

- Still not a magic “detector invisibility” button

- Needs a bit of trial and error to find a style that matches you

- If you are chasing a very specific personal voice, you will still need to manually tune the final draft

Compared with what @sonhadordobosque and @codecrafter described, I would frame it like this:

- If your priority is natural, easy reading, Clever AI Humanizer is a better starting point than Monica, but you still need your own edits to add real personality and concrete detail.

- If your priority is detector games, none of these are safe on autopilot. Use them to break up obvious patterns, then manually add your own examples, small digressions, and inconsistencies that real people naturally introduce.

Bottom line:

Monica’s humanizer is fine as a curiosity inside the platform but not a serious tool for readability or detection. For “make this AI draft feel more like a person wrote it,” something dedicated like Clever AI Humanizer is closer to what you are actually looking for, provided you treat it as a first pass and not the final answer.