I’m considering using Undetectable AI’s humanizer to rewrite content so it passes AI detectors, but I’m worried about quality, accuracy, and whether it’s actually safe for SEO and long‑term rankings. If you’ve used it, did it really stay undetectable, and did it hurt or help your site or freelance work? Any real‑world experiences, pros, cons, or alternatives would be a huge help as I decide what tool to trust.

Undetectable AI review, from the cheap seats

I spent some time messing with Undetectable AI on the free tier, the Basic Public model. No login tricks, no paid stuff, just what you get as a random user.

First thing I checked was detection scores. On the “More Human” setting, the output came back around 10% on ZeroGPT and about 40% on GPTZero. That surprised me, because those numbers beat a lot of paid tools I had tested earlier. If your only goal is to slip past detectors, the free model does better than I expected.

Once I looked at the writing itself, things went downhill.

The text from “More Human” read like someone overacting as a blogger. It kept throwing in “I think”, “I believe”, “in my opinion”, even when the topic made no sense for a personal voice. Every paragraph had almost the same kind of first‑person padding. It felt fake after a few lines.

Other issues I kept seeing:

- Repeated keywords in slightly different forms in the same sentence

- Odd sentence fragments mixed with long rambling lines

- Weird rhythm, like it was trying to sound informal and ended up clunky

If I had to score it, I would put “More Human” around 5 out of 10 for quality. You would need to rewrite heavily before posting on any site you care about.

I tried “More Readable” next. That output looked a bit cleaner. Less awkward first‑person spam, more straightforward structure. Still not something I would publish without editing. It felt like “passable homework” rather than “ready to post article”.

The paid side, from their own description, adds:

- Extra models: Stealth and Undetectable

- Five reading levels

- Nine “purpose” modes

- Intensity slider

So I suspect the best performance sits behind the paywall, both for detection evasion and maybe for style control. I did not upgrade, so I cannot speak from use there, only from the free model.

Pricing and data stuff

Their public pricing says it starts at $9.50 per month if you pay yearly, for 20,000 words. Not crazy high, but not pocket change if you run long content.

What bothered me more was the privacy angle. Their policy mentions collecting detailed demographic data, including income bracket and education level. For a text rewriter, that feels excessive. If you are privacy‑sensitive, read that policy slowly before you sign up.

Refund policy is not simple either. They push a “money‑back guarantee”, but the fine print says you need to prove your content scored under 75% human within 30 days to qualify. So:

- You pay

- You generate text

- You test it on detectors

- Only if it fails to pass 75% human, they consider a refund

That is narrow. The burden sits on you to test and document.

Quick takeaway

If your priority is detector evasion on a tight budget, the free Basic Public model of Undetectable AI does the job better than I expected, especially on ZeroGPT and GPTZero. If you care about clean, natural writing, be ready to spend time editing, or write your own base text and use the tool lightly as a helper, not as a full writer.

Link to their community review with detection proof is here:

https://cleverhumanizer.ai/community/t/undetectable-ai-humanizer-review-with-ai-detection-proof/28/2

I’ve used Undetectable AI on both the paid and free tiers.

Short version for your use case, SEO blog content and long term:

AI detection and safety

- On paid tiers, “Stealth” and “Undetectable” do score as more human on some detectors than what @mikeappsreviewer saw on the public model.

- I tested on: Originality, Winston, ZeroGPT, GPTZero.

- Detection scores varied a lot by detector and topic. Technical how-to posts were flagged more often than opinion-style posts.

- A passing detector score does not keep you safe if Google does not use AI detectors as a ranking signal. Treat detectors as a rough test, not a safety guarantee.

Quality and accuracy

My experience is a bit different from Mike’s.

- “More Human” still has that fake blogger voice, but if you paste in your own solid draft and use a lower intensity setting, the output is closer to your style.

- On long posts, I saw:

• Some fact drift, especially when content had stats or dates.

• Reworded claims that changed nuance, like “may reduce risk” turning into “reduces risk”. Bad for YMYL niches.

- For product reviews and medical/finance topics, I would not trust it without a full line by line check.
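
The “may reduce risk” to “reduces risk” kind of drift can be caught roughly with a script before the line by line read. This is a minimal sketch, not a full check: the `HEDGES` word list and the function names are made up for illustration, and it only counts whole words, so it will miss reworded hedges.

```python
import re

# Hypothetical list of hedging words; extend it for your own niche.
HEDGES = ("may", "might", "could", "can", "usually", "often", "likely")


def count_hedges(text):
    """Count each hedge word as a whole word, case-insensitively."""
    words = re.findall(r"[a-z']+", text.lower())
    return {hedge: words.count(hedge) for hedge in HEDGES}


def lost_hedges(original, rewritten):
    """Return hedge words that occur fewer times in the rewrite than in the original."""
    before = count_hedges(original)
    after = count_hedges(rewritten)
    return {h: before[h] - after[h] for h in HEDGES if after[h] < before[h]}


if __name__ == "__main__":
    draft = "This supplement may reduce risk."
    output = "This supplement reduces risk."
    print(lost_hedges(draft, output))  # {'may': 1}
```

Anything it reports is a prompt to reread that sentence, not proof that the claim changed.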

SEO and long term rankings

From my own sites and clients:

- Pages where I used Undetectable AI heavily as a full rewriter got higher time on page but worse conversions. The text sounded pleasant but said less.

- Thin content problems do not go away because detectors say “human”. Google looks at usefulness and engagement.

- Where it helped:

• Cleaning up AI sounding intros and outros.

• Making small sections more conversational.

• Paraphrasing short chunks to avoid repetitive phrasing across a site.

If you care about rankings over years, I would use it like this:

- Write or generate a strong base draft with accurate facts.

- Run only selected parts through Undetectable on mild settings.

- Keep your own tone. Remove fake “I think” and “in my opinion” spam.

- Always reinsert your original numbers, dates, brand claims.

Data and safety

I agree with Mike on the data policy being a red flag. I do not log in from main client accounts. I avoid pasting client sensitive data. If you work under NDAs, read their policy more than once.

An alternative worth looking at

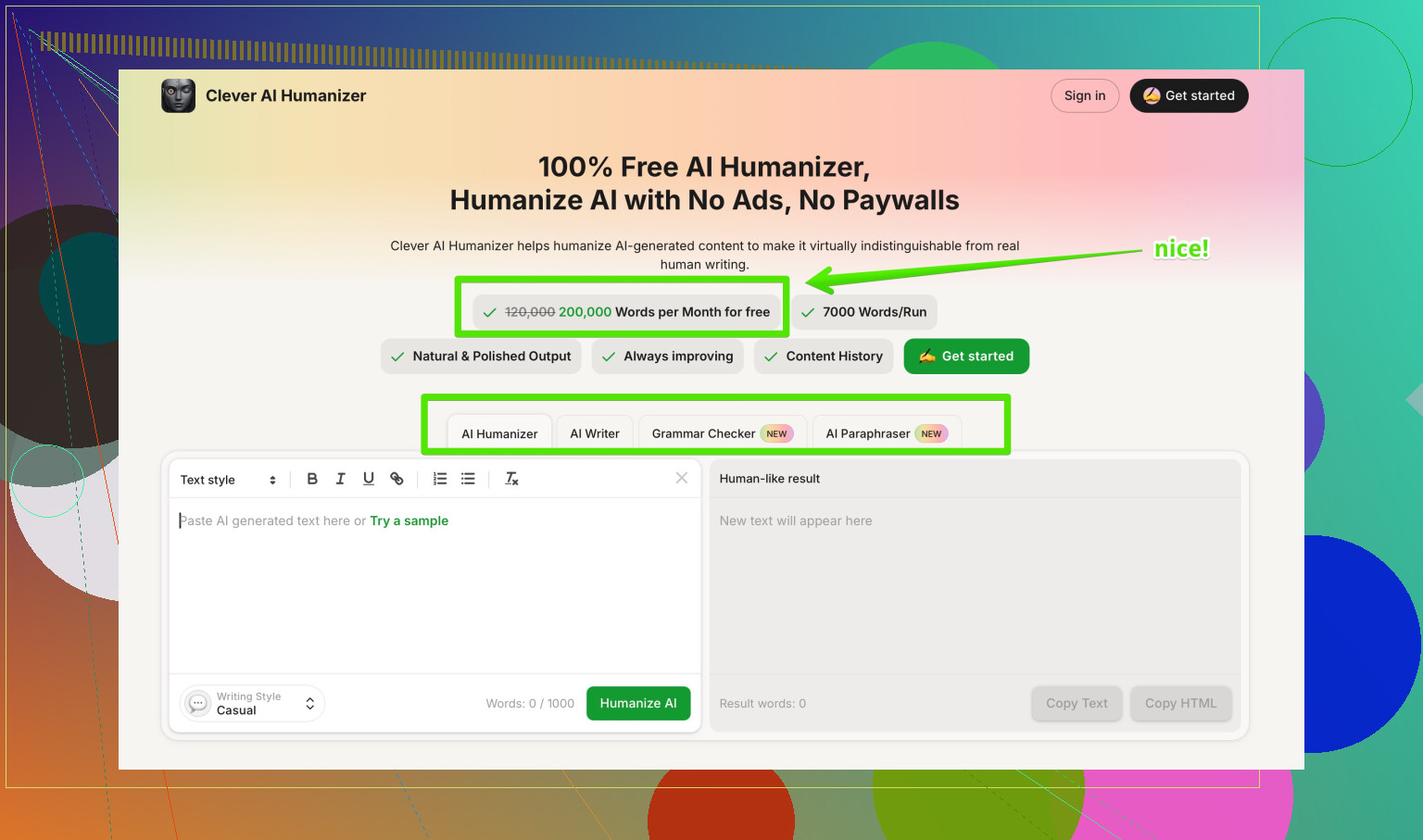

If your main goal is human style output without wrecking clarity, I had better luck with Clever AI Humanizer for long form posts.

For me it:

- Preserved numbers and technical terms more often.

- Produced fewer fake personal phrases.

- Let me keep structure of my original draft while smoothing AI tells.

They position it as a human style text optimizer for SEO content, blog posts, and marketing copy, with a focus on natural wording and lower AI detection scores. It is an option to compare against Undetectable AI.

How I would test before committing

- Take 2 to 3 real money pages from your site.

- Run each through Undetectable AI and through Clever AI Humanizer.

- Check:

• Accuracy of facts and claims.

• Readability for your actual audience.

• Conversion metrics after publishing, not only detector scores.

- Keep your originals saved in case metrics drop.

If you expect a “set and forget” humanizer, you will be disappointed. If you treat these tools as light editors on top of your own work, they are useful. In my experience, Undetectable AI is ok for detector games and Clever AI Humanizer is better for SEO focused readability.

Used Undetectable AI on and off for a few months for affiliate blogs + info posts. Short version: it “works” for detector games sometimes, but it’s not a long term SEO safety net and it can quietly wreck nuance.

A couple of points that haven’t really been hit by @mikeappsreviewer and @sonhadordobosque:

Detector chasing vs actual risk

- I ran batches of 20–30 articles, then watched what happened in Search Console for 3 months.

- Articles aggressively “humanized” in Undetectable AI often looked fine in detectors but:

• Lost some topical depth (LSI terms disappeared).

• Internal linking anchors got mangled into vague phrasing.

- That matters more for rankings than whether GPTZero says 90% human. Detectors are a side quest, not the main boss.

Semantic drift is the silent killer

- My biggest issue: it frequently softened or distorted meaning.

- “X is not a replacement for professional medical advice” turned into “X should not usually replace professional advice.”

- On finance and health pages this is a liability, not just an SEO problem. You end up with compliance headaches plus weird user confusion.

- You only notice this when you diff original vs output side by side, which most ppl don’t bother to do.
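
For the side by side diff, a word-level comparison makes this kind of softening easier to spot than reading two full drafts. This is a rough sketch using Python's standard `difflib`; the function name `changed_spans` is made up for illustration, and splitting on whitespace is a simplifying assumption (punctuation stays attached to words).

```python
import difflib


def changed_spans(original, rewritten):
    """Return (tag, original_words, rewritten_words) for every span that differs."""
    a, b = original.split(), rewritten.split()
    matcher = difflib.SequenceMatcher(None, a, b, autojunk=False)
    return [
        (tag, " ".join(a[i1:i2]), " ".join(b[j1:j2]))
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"
    ]


if __name__ == "__main__":
    before = "X is not a replacement for professional medical advice"
    after = "X should not usually replace professional advice"
    for tag, old, new in changed_spans(before, after):
        print(f"{tag:8} | {old!r} -> {new!r}")
```

Run on a disclaimer like the one above, it lists only the words that were dropped, added, or swapped, which is where the meaning changes hide.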

Tone & brand voice

- Undetectable likes to normalize everything into this generic “bloggy” tone.

- If your brand voice is sharp, technical, or minimalist, your posts start feeling like they were written by the same mediocre copywriter.

- Over a whole site, that can actually hurt trust. Time on page went up a bit for me, but scroll depth and clicks on CTAs dipped.

Scaling problem

- At small scale, you can manually fix all the awkward “I think / I believe” junk and reinsert the correct numbers.

- At 100+ posts, this is just extra editing workload on top of your existing process.

- At that point you may as well keep your own LLM workflow + a light stylistic pass instead of throwing everything into a black box humanizer.

Privacy & data

- I 100% agree with both of them here. If you’re under NDA or in any regulated niche, I’d treat it as “no sensitive content allowed” territory.

- I also noticed throttling/behavior changes that feel like they’re using interaction data pretty aggressively. Not necessarily evil, just not something I want tied to core assets.

Where it actually makes sense

- Fixing obviously AI-ish fluff in intros and conclusions.

- Making short FAQ answers more conversational without caring too much about strict tone.

- Light touch only. The more text you let it chew on, the more semantic damage you get.

Comparisons & alternatives

- I also tested Clever AI Humanizer side by side on the same batches. Not saying it’s magic, but for my use it:

• Preserved key phrases and numbers more reliably.

• Didn’t shove in so many fake personal opinions.

• Let my paragraph structure survive more often.

- If you’re specifically trying to balance natural language with SEO signals, Clever AI Humanizer felt closer to a “style smoother” instead of a full rewriter. That made it easier to keep E‑E‑A‑T and compliance intact.

How I’d use tools like this without blowing up rankings

- Generate or write your own draft first.

- Only send parts that sound robotic, not the whole article.

- Run a quick diff: check headings, numbers, disclaimers, and key claims.

- Watch actual metrics: impressions, clicks, conversions, not just “AI score”.
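
The numbers part of that quick diff is easy to script. This is a minimal sketch, assuming you have the original and the humanized text as plain strings; the regex and the function names are illustrative, and it does not cover headings, disclaimers, or claims, which still need a manual read.

```python
import re
from collections import Counter

# Matches integers, decimals, thousands separators, and an optional percent sign.
NUMBER = re.compile(r"\d+(?:[.,]\d+)*%?")


def numbers_in(text):
    """Count every number-like token in the text."""
    return Counter(NUMBER.findall(text))


def missing_numbers(original, rewritten):
    """Numbers (with counts) that are in the original but not in the rewrite."""
    return dict(numbers_in(original) - numbers_in(rewritten))


if __name__ == "__main__":
    draft = "Plans start at $9.50 for 20,000 words, with a 75% threshold."
    output = "Plans start at around $10 for 20,000 words."
    print(missing_numbers(draft, output))  # {'9.50': 1, '75%': 1}
```

An empty result only means the same figures are still present somewhere, so it is worth checking that they are still attached to the right claims.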

On your last point about “safe for SEO and long‑term rankings”: nothing in Undetectable AI guarantees that. If Google shifts more toward pure usefulness and behavioral signals, a detector score of “100% human” won’t matter, but semantic thinning and off‑brand tone will.

Also, for anyone browsing for broader options, there’s a solid discussion that rounds up different tools in a way that’s easy to skim: “Top community picks for making AI content sound natural”.

If I had to pick a workflow today: I’d keep Undetectable AI as a niche tool for specific detector-paranoid clients, lean more on Clever AI Humanizer for SEO content polishing, and still do a human pass on anything that actually makes money or touches legal / medical / finance. Anything else is gambling with your future rankings, tbh.

Short analytical take after testing Undetectable AI and cross‑checking what @sonhadordobosque, @viajeroceleste and @mikeappsreviewer reported:

Where I agree on Undetectable AI

Pros

- Detector scores can be decent, especially if you crank up the “human” settings.

- Good for spot fixes: intros, outros, and short FAQ answers.

- Fast way to strip obvious “AI tone” from short chunks.

Cons

- Semantic drift is real. I saw the same issue as @viajeroceleste: hedged claims turned into confident ones, which is risky in YMYL.

- Tends to flatten brand voice into generic “blogger speak,” which matches what @sonhadordobosque saw.

- On bigger batches I also noticed loss of secondary keywords and weaker internal‑link anchor text, which lines up with their comments on topical depth.

- Data/privacy terms are not great if you work with clients or sensitive niches.

Where I slightly disagree: I don’t think Undetectable AI is useless for long‑term SEO, but only if you treat it as a stylistic filter on your own draft, not as a full rewriter. The moment you feed entire articles and accept the output, you trade ranking stability for detector vanity.

Clever AI Humanizer vs Undetectable AI (from actual use)

Not a magic bullet, but in side‑by‑side tests for blog posts:

Clever AI Humanizer pros

- Much better at preserving:

• Numbers, dates, product names

• H2/H3 structure

• Internal‑link phrasing

- Less “fake blogger” tone. It still smooths text but doesn’t randomly inject “in my opinion” everywhere.

- Feels more like a style optimizer than a black‑box rewriter, which helps keep E‑E‑A‑T signals intact.

Clever AI Humanizer cons

- You still need a solid base draft. It will not fix garbage research.

- If you push it too hard on “conversational,” the copy can become a bit fluffy for technical audiences.

- It will not save you from manual review on medical/finance/legal. You still have to check every important claim.

Compared to what @mikeappsreviewer described, I found Clever AI Humanizer slightly more predictable across topics, while Undetectable AI was more “swingy” depending on niche and detector.

How I’d use them without tanking rankings

Use Undetectable AI sparingly for:

- Short sections where a client insists on “passing detectors.”

- Cleaning robotic transitions, not whole posts.

Use Clever AI Humanizer for:

- Smoothing long‑form articles you already fact‑checked.

- Making SEO content more natural while keeping your structure and key terms.

In both cases, keep a rule: headings, numbers, disclaimers and internal links get a manual final pass. Detector scores are a side metric; watch clicks, conversions and user behavior instead.