I’ve been considering using WriteHuman AI for my content writing, but I’m unsure if it’s really worth it. I’ve seen mixed opinions online and don’t know what to trust. Can anyone share their real experiences, pros and cons, and whether it’s actually helpful for consistent, human-sounding content?

WriteHuman AI review, from someone who paid for it and poked it way too much

I tried WriteHuman after seeing them name GPTZero directly in their pitch. That hooked me, because most of these tools talk in vague terms and avoid naming detectors.

So I did the boring part. I took three different chunks of AI text and ran them through WriteHuman, then sent the outputs to:

- GPTZero

- ZeroGPT

Results were not what I expected.

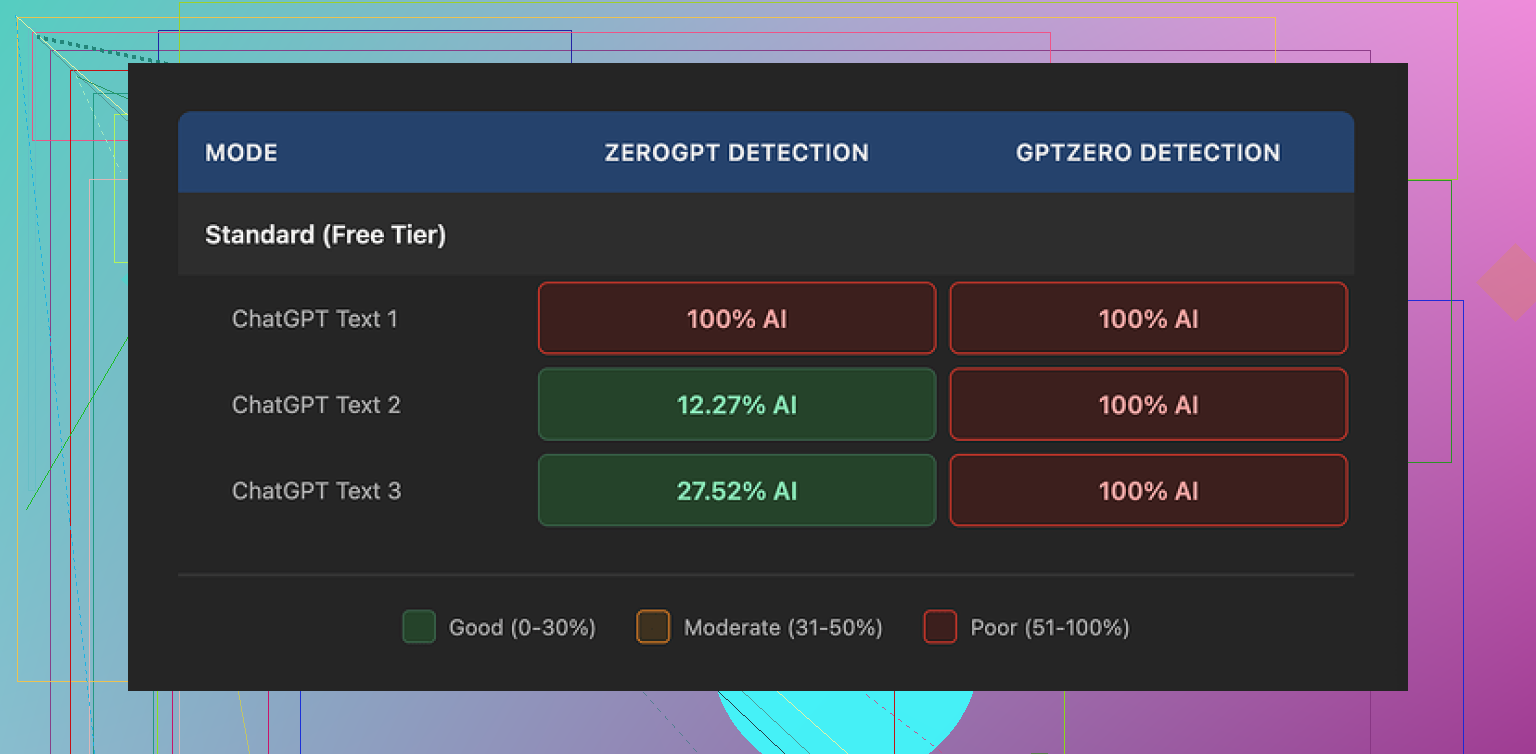

GPTZero results

Even though WriteHuman markets itself as tested against GPTZero, all three of my outputs came back as 100% AI on GPTZero.

Not high. Not mixed. Straight 100% on every sample.

These were different source texts too. One technical explanation, one casual blog-style piece, one more academic style. All three flagged as AI after ‘humanization.’

ZeroGPT results

ZeroGPT was all over the place:

- First sample: 100% AI

- Second sample: about 12% AI

- Third sample: around 28% AI

So: same tool, same settings, similar-length inputs, totally different readings. That lines up with what I have seen from detectors in general. They swing.

Still, if a tool hypes itself as detector-safe, you expect at least some consistent shift down, not one sample nailed at 100% and others dipping randomly.
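To put a rough number on that swing, here is a minimal Python sketch using only the three ZeroGPT readings listed above. The variable names are made up for illustration, the second and third values are approximate ("about 12%", "around 28%"), and nothing here calls a detector API. It just computes the average and the gap between the best and worst sample.

```python
from statistics import mean

# ZeroGPT "AI" percentages for the three samples above.
# The last two are approximate readings, not exact values.
zerogpt_scores = [100, 12, 28]

average = mean(zerogpt_scores)                       # arithmetic mean of the three readings
spread = max(zerogpt_scores) - min(zerogpt_scores)   # gap between highest and lowest reading

print(f"average: {average:.1f}%")
print(f"spread:  {spread} points")
```

With only three samples this is not a statistic anyone should lean on, but a gap that wide between the best and worst reading is the inconsistency described above.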

Text quality and weird quirks

The writing itself felt off in a few ways.

- Tone jumps: In one output, the first two paragraphs sounded like a tired corporate blog. Later paragraphs suddenly switched to an almost chatty Reddit voice. It looked stitched together.

- Style drift: Some sentences were dense and formal, then out of nowhere a short, blunt line appeared. That sort of variation can help confuse detectors, but when you read it as a person, it feels like multiple authors, or a bad rewrite job.

- Typos: I got at least one obvious typo, ‘shfits’ instead of ‘shifts.’ I did not see that in the original AI text, so it came from their system.

That typo and the random tone changes might help nudge detectors off, but they also make the content harder to use if you need it to look like something you would send to a boss, professor, or client.

Pricing and plans

Their pricing felt high for what I saw.

- Basic plan: starts at $12 per month on annual billing

- That tier gives 80 requests

- All paid plans unlock an ‘Enhanced Model’ and more tone options

So the trade-off:

You pay monthly, you get more control over tone and an upgraded model that they say is better. You still get no guarantee that any detector will clear it.
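For a sense of what that works out to per request, here is a back-of-the-envelope sketch in Python. It assumes the 80 requests are a monthly quota, which the plan description above does not spell out, and it uses the "starts at" price, so treat the result as a floor rather than an exact figure.

```python
# Figures taken from the Basic plan described above.
monthly_price_usd = 12.0   # "starts at $12 per month on annual billing"
requests_included = 80     # ASSUMPTION: quota is per month; the source only says "80 requests"

cost_per_request = monthly_price_usd / requests_included
annual_total = monthly_price_usd * 12   # what the annual plan adds up to over a year

print(f"cost per request: ${cost_per_request:.2f}")
print(f"annual total:     ${annual_total:.2f}")
```

If the quota turns out not to be monthly, the per-request figure changes accordingly, so check the plan page before relying on it.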

Important policy notes

Three things in their terms made me pause more than the detection results.

- No guarantee on detector bypass: They openly say they cannot promise you will bypass any detector. To be fair, no one serious can promise that, but it clashes with the marketing that leans on GPTZero by name.

- No refunds: If it fails to reduce your detection score, you have no way to get your money back. Once you pay, that is it.

- Training use of your text: Your submitted text is licensed for AI training. If you are feeding it proprietary work stuff, student assignments, or client docs, you should stop and think about that.

If you do not like that use of your text, your only real option is to skip the tool.

Comparison with Clever AI Humanizer

I tested Clever AI Humanizer in the same session, with similar kinds of input.

My experience there:

- Better detector scores on the same style of inputs

- No paywall for basic use at the time I tried it

- More consistent tone, fewer random swings, no obvious typos in my tests

Not saying it is perfect, but for my use case, it did a better job at lowering AI flags without wrecking readability.

Who this is for (in my opinion)

WriteHuman might still make sense for you if:

- You do not mind your text being used for training

- You are okay with paying monthly even though there is no refund if it fails

- You want more tone sliders to play with and do not mind fixing awkward outputs by hand

If you need:

- Reliable detector drops on GPTZero

- Stable tone

- Strong privacy around your input text

- Clear recourse when a tool does nothing for you

Then this one did not pass my tests. I ended up sticking with Clever AI Humanizer for now and doing a manual edit pass myself instead of trusting any ‘one click’ fix.

I tried WriteHuman for about a week on client blog posts and a couple of school-style essays. Mixed bag.

Pros I saw:

- Output is not total garbage. For simple blog content it looked ok on a skim.

- The tone sliders help if you want to move stuff from “chatbot-y” to more casual or more formal.

- For some pieces, ZeroGPT scores dropped a bit, similar to what @mikeappsreviewer saw. I had a 90% AI text drop to around 20–30% on ZeroGPT on a few tests.

Cons:

- GPTZero basically laughed at it. Most of my “humanized” outputs stayed 90–100% AI there.

- Tone consistency was rough. First paragraph sounded like a business blog, next looked like a comment thread. Needed manual editing to not look weird.

- I saw random typos and odd word choices that were not in the original AI text. Fixable, but it takes time.

- Pricing feels steep if you care about detector scores. You pay monthly, no refunds, no guarantee on detection, and your text goes into their training. For client or school work I don’t like that at all.

If your goal is:

- light paraphrasing

- making AI text feel less “chatbot” before you do your own heavy edit

then it is ok as a helper.

If your goal is:

- “I need this to pass GPTZero or similar on its own”

- “I need strong privacy for assignments or client docs”

then I would not rely on it.

I had better luck pairing regular AI output with manual edits. Change sentence lengths, add specific details from your own experience, delete fluff, and tweak word choice. That dropped detector scores more than WriteHuman in a lot of cases.

If you want a dedicated humanizer, Clever AI Humanizer worked a bit better for me on detectors and kept tone more stable. Still not magic, but for “SEO-friendly” content and blog posts it felt more usable, especially when I ran the same text through multiple tools.

So, if you are tight on budget or worried about your text being logged, I’d skip WriteHuman, or at least stay month-to-month and test hard before you commit.

I’m kinda in the same camp as @mikeappsreviewer and @espritlibre on this, but with a slightly different takeaway.

Short version: WriteHuman is “okay-ish” as a stylistic rewriter, not great as an “AI detector bypass” tool, and kinda overpriced for what it actually delivers.

Pros I actually noticed:

- It can make stiff AI text read a bit more relaxed or more formal, depending on the sliders.

- For casual blog content, on a quick skim, it doesn’t look terrible. If you’re not super picky, it’s usable.

- On some detectors (like ZeroGPT), I did see scores drop sometimes, just not reliably or in a way I’d want to bet anything important on.

Cons that matter more in real life:

- If your main goal is “this needs to pass GPTZero,” it’s not doing miracles. Same story others saw: GPTZero still flags a ton of the “humanized” stuff as AI.

- Tone is messy. Paragraph 1 feels corporate, paragraph 2 feels like Reddit, paragraph 3 looks like a school essay. You can fix it, but then… why pay for a magic button if you still have to hand-edit half of it?

- Little typos and awkward phrasing pop up that weren’t in the original. One or two are fine, but over many articles it gets annoying and costs you time.

- No refunds + no detector guarantee + your text can be used for training is a rough combo if you work with client docs, school work, or anything sensitive.

Where I slightly disagree with some of the other takes:

If you’re just cranking out low-stakes niche blog posts, product roundups, or filler content where you don’t care much about privacy or a perfectly smooth voice, then WriteHuman isn’t totally useless. It’s a decent “rough pass” tool. I’ve used it to knock the obvious chatbot tone off drafts, then gone in and added my own examples and personal bits. That workflow was… acceptable.

But if your priorities are:

- Consistent tone

- Better odds on multiple AI detectors

- Less risk of your text getting logged for training

- Not paying for something you might drop in a week

then I’d honestly look at something like Clever AI Humanizer instead. When I tested both, Clever AI Humanizer gave me:

- More stable voice across the whole piece

- Better average drops in AI detection scores

- Outputs that needed less cleanup for client-facing stuff

It’s still not “click once and fool everything,” but paired with your own edits (change sentence lengths, add real details, cut fluff, inject your actual voice) it felt closer to what people think WriteHuman is doing.

So:

- If you’re curious and have spare cash, treat WriteHuman as a convenience paraphraser, not a detector shield.

- If you’re tight on budget or dealing with anything important, I’d skip locking into their subscription and either use Clever AI Humanizer plus manual editing, or just manually rework your AI draft yourself.

TL;DR: Mixed bag, leaning toward “not worth it” unless your use case is very low-stakes and you just want a quick stylistic shake-up.